_____________________

It’s smartoctober! Every year we spend a month deep diving into a subject or skill set that we deem to be absolutely essential to newsrooms around the world. This year it’s time to consider AI.

_____________________

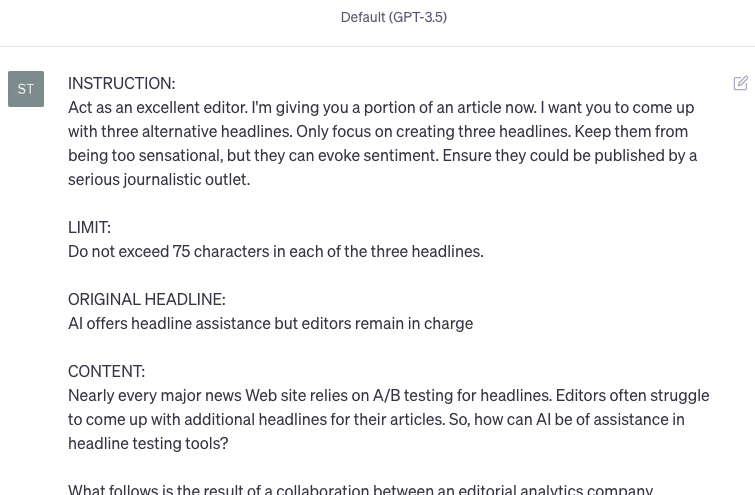

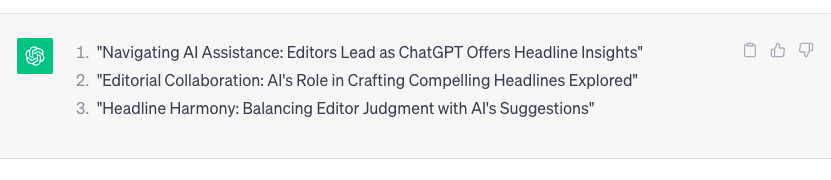

Right now, there are tools and features available to editors and journalists that won't pitch, research, write and publish Nobel-winning features from scratch, but will help you refine your output. They are best viewed the way you'd view automatic headline suggestions in your CMS: a useful, time-saving device of considerable value for writing a headline, but of little use for anything else.

So, rather than think about what’s next for AI and where all this is going, this week we’re considering what’s available now.

Three easy ways to start incorporating AI into your newsroom, without disrupting your workflow

- Automated user needs analysis

WHAT IT IS

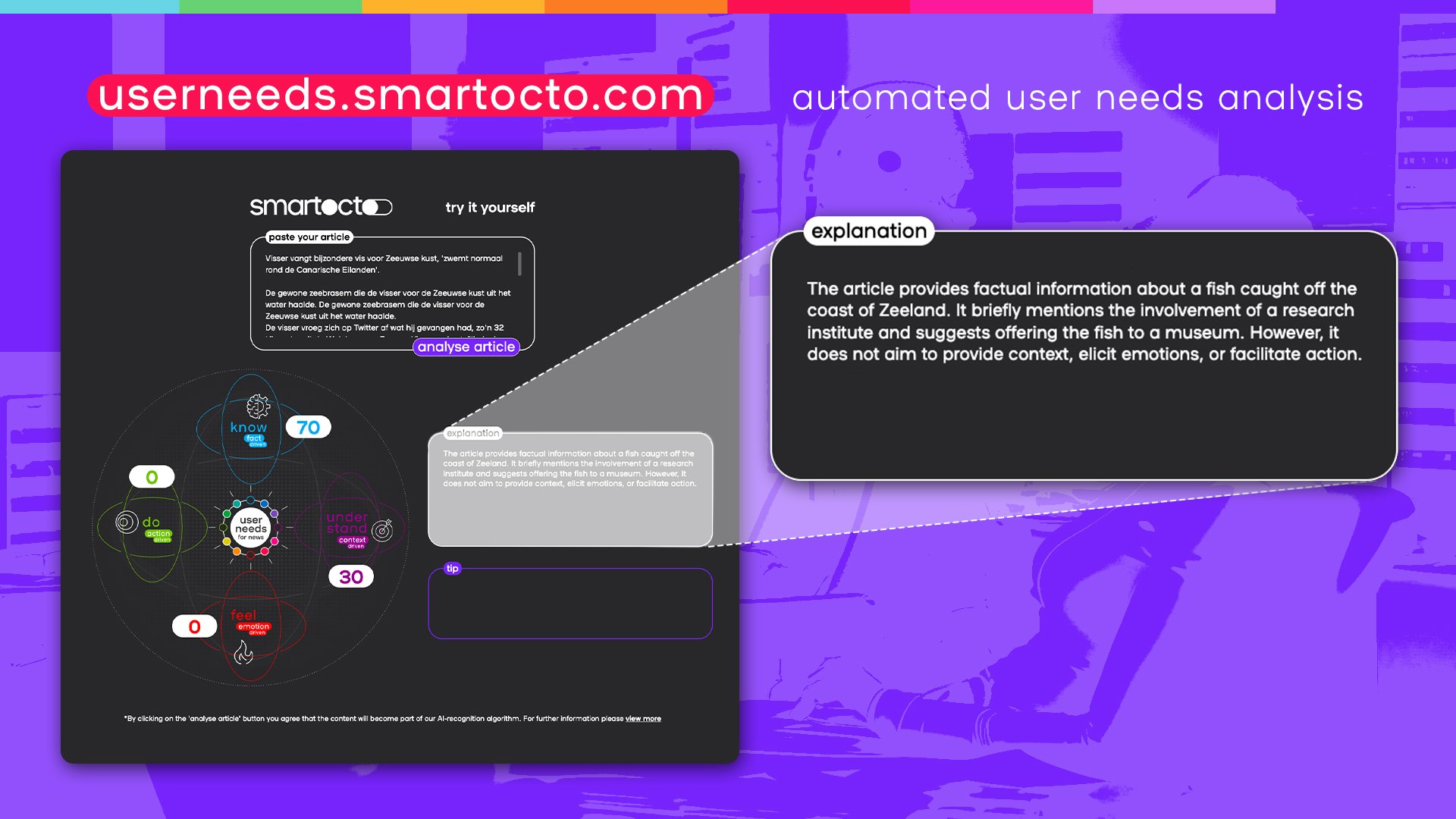

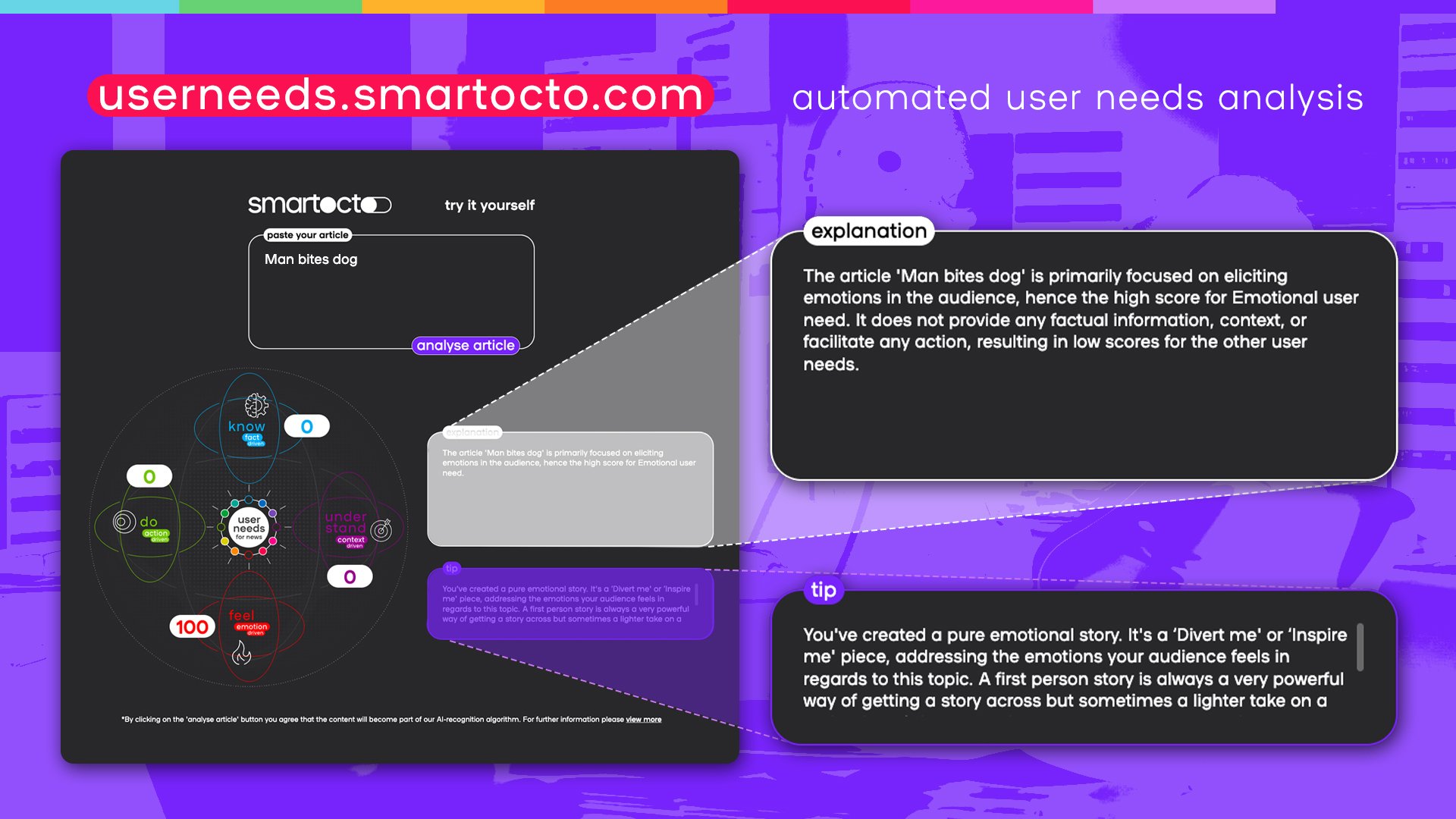

We’re excited about this one. Currently in beta, the user needs analysis tool lets you paste in the text of any article. Our algorithmic wizards then analyse it to reveal which user need or needs it covers.

WHY IT’S USEFUL

When you’re starting out with user needs, it can be difficult to work out which articles address which user needs. The tool takes the labour out of that process and lets you see quickly where each article sits in the matrix of four core ‘drivers’.

But it’s not just illustrative. It’s actionable too. As well as revealing which drivers or user needs an article addresses, it offers tips on where the article falls short, so you can make sure that what you think you’re producing is what you’re actually delivering.

Automated user needs analysis in journalism is pivotal for several reasons, explains Goran Milovanovic, senior data scientist at smartocto. “Firstly, it significantly enhances efficiency and responsiveness by swiftly providing feedback on user needs in particular articles. This agility ensures that news organisations can adapt to rapidly changing audience preferences. Secondly, it enables targeted content delivery, enhancing user engagement and loyalty. Automated analysis also empowers newsrooms with data-driven insights, guiding editorial decisions to produce higher-quality content. Lastly, this approach fosters a continuous feedback loop, facilitating ongoing improvement in content relevance and accountability.”

Rutger Verhoeven, cofounder and CMO at smartocto, thinks this tool could be crucial for optimising your content strategy. “Because this technology gives you instant feedback about which journalistic angle your article was written from, you immediately learn where optimisations can be made. If you wanted to write a contextual Educate me piece, but the system returns that it has mainly become a factual representation, you can specifically search for places where the story can be sharper, better and more attractive to your audience.”